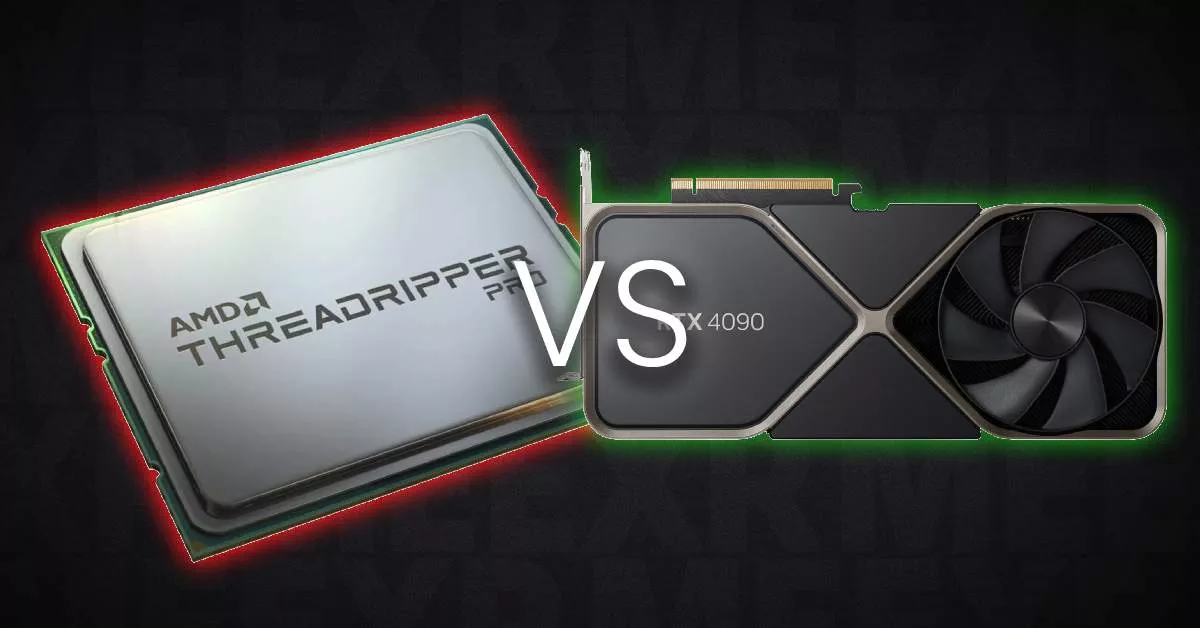

When it comes to 3D rendering, artists and professionals often face the same question: CPU or GPU rendering? Both have their merits, but which one is the right tool for the job? This decision has a major impact on render speed, cost, and even the quality of your final image. With powerful hardware like the Ryzen 9 9950X CPU and the RTX 5090 GPU on the market, it’s more important than ever to understand the strengths and weaknesses of each, especially if you’re aiming to cut your render times and raise the quality of your output.

The Evolution of GPU Rendering

Not long ago, the answer to this debate was simple: CPUs were the go-to for rendering. GPUs were used for rasterized, real-time graphics (think games), which didn’t require the sophisticated light simulation we now expect in 3D rendering. But as GPU technology advanced, things started to change. The introduction of CUDA and OpenCL made general-purpose computing on GPUs practical and opened the door to GPU-based ray tracing, enabling stunning realism at blazing speeds.

In those early days, though, GPUs weren’t ready for prime time in rendering. They were held back by limited VRAM, poor support for essential features like render elements (z-depth, diffuse, light passes), and a lack of reliability with large scenes. But these limitations didn’t last long.

The Current State of GPU Rendering

Fast forward to today, and GPUs have matured into highly capable, feature-rich tools for rendering. Many modern render engines can use system RAM alongside VRAM (often called out-of-core rendering), allowing you to load larger scenes than VRAM alone would hold. Render elements are supported, and many GPU renderers now offer the same quality as CPU-based solutions.

The best part? GPU rendering is fast. With GPUs like the RTX 4090 or RTX 5090, you can expect your rendering tasks to be completed in record time, freeing up your workflow to do more creative work.

When to Use CPU Rendering

Even though GPUs are the new powerhouse in 3D rendering, there are still cases where CPU rendering has its place. In particular, some render engines (like Arnold) still rely on the CPU for certain tasks. The CPU code path in these engines is usually the most mature and feature-complete, which can matter when working with highly complex materials, intricate lighting setups, or other advanced features not yet supported on GPUs.

Arnold and CPU Rendering

For instance, Arnold, a popular rendering engine, still uses CPU rendering as its primary method for maximum feature support. If you rely on specific features or nodes that aren’t fully supported by GPU acceleration, CPU rendering is your best bet. However, Arnold and other engines are steadily improving their GPU support, so this gap is getting smaller with each release.

Why GPU Rendering is a Game Changer

One of the biggest advantages of GPU rendering is the sheer speed it offers. For anyone looking to push render times down, GPUs are the clear winner. This is largely due to their architecture, which is optimized for parallel processing: thousands of small cores work on many pixels or light samples at once, which makes GPUs ideal for the highly repetitive calculations involved in 3D rendering. With GPUs, you can expect significant reductions in rendering times, which allows you to focus on other aspects of your creative work.
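To make the parallelism point concrete, here is a minimal Python sketch. It assumes a toy `shade` function and a tiny 8×4 image, both invented for illustration and not taken from any real renderer. What it shows is that each pixel can be computed independently, so the work can be split across workers without changing the result.

```python
from concurrent.futures import ThreadPoolExecutor

WIDTH, HEIGHT = 8, 4  # tiny hypothetical image size


def shade(x: int, y: int) -> float:
    """Toy stand-in for a per-pixel render calculation (not a real shader)."""
    return (x / (WIDTH - 1)) * (y / (HEIGHT - 1))


def render_row(y: int) -> list[float]:
    # Each pixel depends only on its own coordinates, never on its neighbours.
    return [shade(x, y) for x in range(WIDTH)]


def render_serial() -> list[list[float]]:
    # One worker computes every row, one after another.
    return [render_row(y) for y in range(HEIGHT)]


def render_parallel(workers: int = 4) -> list[list[float]]:
    # Rows are handed out to several workers; map() returns them in row order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(render_row, range(HEIGHT)))
```

Python threads will not make this toy example faster. The point is only that the serial and parallel versions produce identical images, which is the property that lets a GPU spread the same kind of work across thousands of cores.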

The Speed Factor: A Real-World Example

Imagine rendering a scene with complex lighting, materials, and textures. With a powerful GPU like the RTX 4090 or RTX 5090, you’ll often see speeds that put even the best CPUs to shame. For example, a Threadripper PRO 5995WX (a 64-core workstation CPU) may take hours to complete a rendering job, while an RTX 5090 GPU can cut that time down to minutes, provided the scene fits in GPU memory. It’s clear why many professionals prefer using GPUs for large-scale rendering tasks.

Why Choose CPU Rendering: A Few Use Cases

While GPU rendering may be faster and more efficient for many tasks, there are still instances where CPU rendering might be necessary:

- Handling Large Scenes: If your scene is too large to fit into VRAM, CPU rendering might be your only option. While GPUs are fast, they’re limited by the amount of VRAM they have, whereas CPUs have access to much larger system RAM.

- Precision and Reliability: Some tasks may require high levels of accuracy, which can sometimes be better handled by CPUs. For example, certain types of noise reduction or precision modeling might benefit from CPU rendering.

- Old Engines or Features: Some older engines or niche features may still require CPU rendering, especially if GPU support is not fully realized.
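
The rules of thumb in the list above can be sketched as a small Python helper. This is only an illustration: the function name, the `needs_cpu_only_features` flag, and the 80% VRAM headroom are assumptions made for the example, and it ignores engines that can spill scene data into system RAM.

```python
def choose_render_device(
    scene_gb: float,
    vram_gb: float,
    needs_cpu_only_features: bool = False,
    headroom: float = 0.8,  # assumed safety margin, not a measured value
) -> str:
    """Return "CPU" or "GPU" based on the use cases listed above."""
    # Features or older engines without GPU support force the CPU path.
    if needs_cpu_only_features:
        return "CPU"
    # A scene that does not fit comfortably in VRAM falls back to the CPU,
    # which can draw on the larger pool of system RAM.
    if scene_gb > vram_gb * headroom:
        return "CPU"
    # Otherwise the GPU is the faster choice.
    return "GPU"
```

For example, a 10 GB scene on a 24 GB card would come back as `"GPU"`, while a 30 GB scene on the same card would come back as `"CPU"`.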

The Bottom Line: Which Should You Choose?

In most cases, if you’re looking for speed and efficiency, a good GPU like the RTX 4090 or RTX 5090 will significantly outperform a CPU-only setup. However, if your scene requires more memory or specialized rendering features, CPU rendering may still be the way to go. Many modern render engines, such as Blender Cycles, Redshift, and Arnold, allow you to choose between CPU and GPU rendering based on the needs of your project. This gives you the flexibility to select the optimal solution depending on your hardware and the complexity of your scene.
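As one concrete illustration of switching devices, Blender Cycles accepts a `--cycles-device` argument when rendering from the command line. The Python sketch below only builds that command. The helper name and the `scene.blend` file are hypothetical, and it assumes a `blender` executable is available on your PATH. Other engines expose the same choice through their own settings.

```python
# Device names accepted by Blender's --cycles-device argument.
CYCLES_DEVICES = {"CPU", "CUDA", "OPTIX", "HIP", "ONEAPI", "METAL"}


def cycles_render_command(blend_file: str, device: str, frame: int = 1) -> list[str]:
    """Build a background-render command for Cycles on the chosen device."""
    device = device.upper()
    if device not in CYCLES_DEVICES:
        raise ValueError(f"Unknown Cycles device: {device}")
    return [
        "blender",
        "-b", blend_file,           # run without the UI
        "-E", "CYCLES",             # select the Cycles render engine
        "-f", str(frame),           # render a single frame
        "--",                       # arguments after this go to Cycles
        "--cycles-device", device,
    ]
```

Passing the resulting list to `subprocess.run` would start the render. Calling the helper once with `"CPU"` and once with `"OPTIX"` on the same scene is a simple way to compare both paths on your own hardware.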

Final Thoughts: Striking the Right Balance

Ultimately, the choice between CPU and GPU rendering boils down to your specific project needs. For most 3D artists and studios, GPU rendering is the clear winner in terms of speed, cost, and ease of use. However, CPU rendering still has its place, especially when you need to rely on specialized features or are working with very large, complex scenes. No matter what, be sure to experiment with both to see which one works best for your workflow!

Related Reading

If you’re interested in GPU performance comparisons and specs, read our in-depth breakdown: RTX 5090 vs 4090 vs 3090 Graphics Card Comparison.

Or see our post on the top rendering software choices for product visualization.